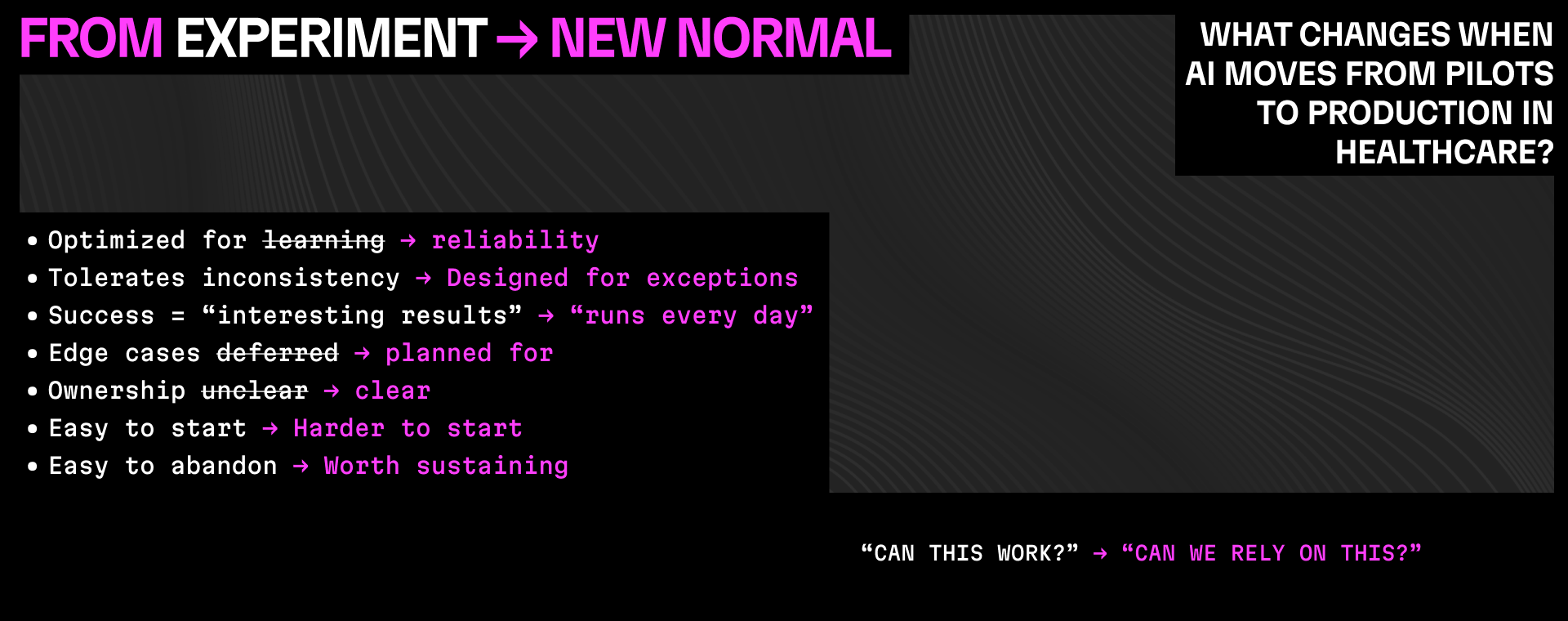

From experiment to new normal: what we look for before AI belongs in production

Let’s be honest: at Synthpop, we didn’t arrive at this framework fully formed.

Like many teams building with AI in healthcare, some of our deployments showed real promise right away – and then took much, much longer than expected to make it all the way to production. Not because the technology failed outright, but because we underestimated what it takes for AI to operate reliably inside real healthcare workflows.

Those moments were uncomfortable.

They were also formative.

They forced us to separate what works in theory from what holds up in practice – across volume, exceptions, regulations, and human behavior.

This framework is the result of that learning.

It reflects patterns we’ve seen not only across customer environments, but in our own work as well. Over time, it became clear that the difference between an AI experiment and a new normal had very little to do with model capability – and everything to do with how systems are designed to operate once the pilot ends.

Experiments prove capability. The new normal proves reliability.

Experiments are about possibility.

They answer questions like:

• Can AI perform this task?

• Can it integrate with our environment?

• Can it reduce effort in a controlled setting?

Those are necessary questions. But they stop short of what healthcare actually requires.

The new normal begins when the expectation changes from “Can this work?” to “Can this run, consistently, as part of care delivery?”

That shift demands a different, higher standard.

What changes when AI moves into production

The Experiment vs. New Normal framework captures the patterns we see most often when AI efforts either stall – or successfully cross into production.

The unit of value shifts:

In experiments, value is measured at the task level.

In production, value is measured across the patient journey.

If automation improves one step but creates delays or extra handoffs downstream, the gain doesn’t hold in the real world.

Responsibility becomes explicit:

Pilots often operate with implicit ownership.

Production systems require named accountability, escalation paths, and clear decision boundaries.

If something breaks, it must be immediately clear who is responsible – and what happens next.

Humans are intentionally in the loop:

Production AI is not autonomous by default.

Humans are part of the design – not as backstops, but as operators who understand when AI should act, when it should pause, and when judgment must take over.
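To make that concrete, here is a minimal sketch of what an explicit act / pause / escalate boundary could look like in code. It is an illustration only, not our production logic: the `Prediction` fields, the `route` function, and both thresholds are hypothetical, and real cut-offs would be set together with clinical and operations owners.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ACT = "act"            # AI completes the step on its own
    PAUSE = "pause"        # AI holds the item until an operator confirms
    ESCALATE = "escalate"  # a person takes over the decision


@dataclass
class Prediction:
    confidence: float         # model's self-reported score in [0, 1] (assumed)
    is_known_exception: bool  # set by a hypothetical upstream rules check


# Hypothetical thresholds, chosen only for illustration.
ACT_THRESHOLD = 0.95
PAUSE_THRESHOLD = 0.70


def route(pred: Prediction) -> Route:
    """Decide who handles an item: the AI, the AI with sign-off, or a person."""
    # Known exceptions and low-confidence items always go to human judgment.
    if pred.is_known_exception or pred.confidence < PAUSE_THRESHOLD:
        return Route.ESCALATE
    # Middle-confidence items wait for an operator to confirm.
    if pred.confidence < ACT_THRESHOLD:
        return Route.PAUSE
    return Route.ACT
```

The point of the sketch is that the boundary is written down and reviewable, so an operator can see why an item was paused or handed over rather than inferring it after the fact.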

Failure is assumed, not avoided:

Healthcare workflows are full of exceptions.

Systems that work only under ideal conditions fail quietly in production. Systems designed for the new normal assume variability – and surface issues early and visibly.

Success is operational, not technical:

In experiments, success is often model accuracy or task completion.

In production, success is measured in time to treatment, backlog reduction, staff capacity, and consistency over time.

If those outcomes aren’t improving, the system isn’t ready.

Why pilots often stall here

Most pilots don’t fail because AI is incapable.

They stall because experimental systems are asked to behave like infrastructure – without being designed that way.

Governance is unclear.

Ownership is diffuse.

Edge cases are ignored until they become problems.

The result is hesitation – not because leaders are skeptical of AI, but because they don’t yet have something they can depend on.

How we use this framework

We don’t use this framework to judge past decisions.

We use it to make the next decision clearer.

It helps organizations understand:

• where an AI effort sits today

• what assumptions are still experimental

• what would need to change for it to become operational

In many cases, the answer isn’t “replace the technology.”

It’s “redesign how it’s deployed.”

Why this matters now

Healthcare doesn’t need more pilots that never graduate.

It needs AI systems that are designed – from the start – to operate in the real world.

The difference between experimentation and the new normal isn’t ambition or intelligence.

It’s operational discipline.

That discipline is what allows AI to stop being a project – and start becoming infrastructure.